The Co-Processor Architecture: An Embedded System Architecture for Rapid Prototyping

2021-07-06

Editor’s Note — Although well known for its digital processing performance and throughput, the co-processor architecture provides the embedded systems designer opportunities to implement project management strategies, which improve both development costs and time to market. This article, focused specifically upon the combination of a discrete microcontroller (MCU) and a discrete field programmable gate array (FPGA), showcases how this architecture lends itself to an efficient and iterative design process. Leveraging researched sources, empirical findings, and case studies, the benefits of this architecture are explored and exemplary applications are provided. Upon this article’s conclusion, the embedded systems designer will have a better understanding of when and how to implement this versatile hardware architecture.

Introduction

The embedded systems designer finds themselves at a juncture of design constraints, performance expectations, and schedule and budgetary concerns. Indeed, even the contradictions in modern project management buzzwords and phrases underscore the precarious nature of this role: “fail fast”; “be agile”; “future-proof it”; and “be disruptive!” The acrobatics involved in trying to satisfy these expectations can be harrowing, and yet these expectations continue to be reinforced throughout the market. What is needed is a design approach that allows an evolutionary, iterative process to be implemented, and, as with most embedded systems, it begins with the hardware architecture.

The co-processor architecture, a hardware architecture known for combining the strengths of both microcontroller unit (MCU) and field programmable gate array (FPGA) technologies, can offer the embedded designer a process capable of meeting even the most demanding requirements, and yet it allows for the flexibility necessary to address both known and unknown challenges. By providing hardware capable of iteratively adapting, the designer can demonstrate progress, hit critical milestones, and take full advantage of the rapid prototyping process.

Within this process are key project milestones, each of which adds its own unique value to the development effort. Throughout this article, these will be referred to by the following terms: the Digital Signal Processing with the Microcontroller milestone, the System Management with the Microcontroller milestone, and the Product Deployment milestone.

By the conclusion of this article, it will be demonstrated that a flexible hardware architecture can be better suited to modern embedded systems design than a more rigid approach. Furthermore, this approach can result in improvements to both project cost and time to market. Arguments, provided examples, and case studies will be used to defend this position. By observing the value of each milestone within the design flexibility that this architecture provides, it becomes clear that an adaptive hardware architecture is a powerful driver in pushing embedded systems design forward.

Exploring the strengths of the co-processor architecture: design flexibility and high-performance processing

A common application for FPGA designs is to interface directly with a high-speed analog-to-digital converter (ADC). The signal is digitized, read into the FPGA, and then digital signal processing (DSP) algorithms are applied to it. Finally, the FPGA makes decisions based upon the findings.

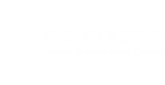

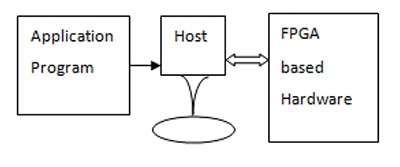

Such an application will serve as the example throughout this article. Furthermore, Figure 1 illustrates a generic co-processor architecture, where the MCU and FPGA are connected through the MCU’s external memory interface. The FPGA is treated as if it were a piece of external static random-access memory (SRAM). Signals come back to the MCU from the FPGA and serve as hardware interrupt lines and status indicators. This allows the FPGA to indicate critical states to the MCU, such as communicating that an ADC conversion is ready, or a fault has occurred, or another noteworthy event has happened.
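As a minimal sketch of what "treating the FPGA as external SRAM" can look like from the MCU's side, the C fragment below reads and writes FPGA registers through an ordinary pointer. The register offsets, bit names, and function names are hypothetical and are not taken from any particular device; on real hardware the base pointer would be the address assigned by the MCU's external memory controller.

```c
#include <stdint.h>

/* Hypothetical register map: word offsets inside the FPGA's memory window. */
enum {
    FPGA_REG_STATUS   = 0, /* status flags raised by the FPGA */
    FPGA_REG_CONTROL  = 1, /* control bits written by the MCU */
    FPGA_REG_ADC_DATA = 2  /* most recent ADC sample */
};

/* Hypothetical status bits. */
#define FPGA_STATUS_ADC_READY (1u << 0)
#define FPGA_STATUS_FAULT     (1u << 1)

/* The base pointer is passed in so the same code can run against the real
 * memory window on the MCU or against a plain array on a PC. */
static inline uint16_t fpga_read(const volatile uint16_t *base, unsigned reg)
{
    return base[reg];
}

static inline void fpga_write(volatile uint16_t *base, unsigned reg, uint16_t value)
{
    base[reg] = value;
}

/* Returns 1 and stores a sample if the FPGA reports a finished conversion,
 * otherwise returns 0 and leaves *sample untouched. */
int fpga_poll_adc(const volatile uint16_t *base, uint16_t *sample)
{
    if ((fpga_read(base, FPGA_REG_STATUS) & FPGA_STATUS_ADC_READY) == 0) {
        return 0;
    }
    *sample = fpga_read(base, FPGA_REG_ADC_DATA);
    return 1;
}
```

In a real design the status bits would more likely be serviced from the hardware interrupt lines than by polling; polling is used here only to keep the sketch short.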

Figure 1: Generic co-processor diagram (MCU + FPGA). (Image source: CEPD)


The strengths of the co-processor approach are probably best seen within the deliverables of each of the above-mentioned milestones. Value is assessed by not only listing the accomplishments of a task or phase but also by assessing the enablement that these accomplishments allow. The answers to the following questions assist in assessing the overall value of a milestone’s deliverables:

- Can the progress of other team members now more rapidly continue, as project dependencies and bottlenecks are removed?

- How do the accomplishments of the milestone enable further parallel execution paths?

The digital signal processing with the microcontroller milestone

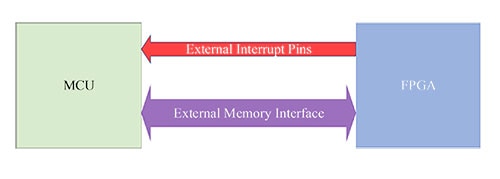

Figure 2: Architecture - digital signal processing with the microcontroller. (Image source: CEPD)


The first development stage that this hardware architecture allows places the MCU front and center. All things being equal, MCU and executable software development is less resource-intensive and less time-consuming than FPGA and hardware description language (HDL) development. Thus, by initiating product development with the MCU as the primary processor, algorithms can be implemented, tested, and validated more rapidly. This allows algorithmic and logical bugs to be discovered early in the design process, and it allows substantial portions of the signal chain to be tested and validated.

The FPGA’s role in this initial milestone is to serve as a high-speed data gathering interface. Its task is to reliably pipe data from the high-speed ADC, alert the MCU that data is available, and present this data on the MCU’s external memory interface. Although this role does not include implementing HDL-based DSP processes or other algorithms, it is nonetheless highly critical.
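The hand-off described above (FPGA signals that data is available, MCU reads it from the external memory interface) might be sketched as follows. The block length, the handler name, and the buffer layout are all assumptions made for illustration; a real interrupt handler would be registered through the MCU vendor's own mechanism.

```c
#include <stddef.h>
#include <stdint.h>

#define BLOCK_LEN 8 /* hypothetical number of samples per FPGA block */

/* Set by the interrupt handler, cleared by the main loop. */
static volatile int g_block_ready = 0;

/* Hypothetical handler wired to the FPGA's "data available" line. */
void fpga_data_ready_isr(void)
{
    g_block_ready = 1;
}

/* Copies one block out of the FPGA window if one is pending.
 * Returns the number of samples copied (0 when nothing was pending). */
size_t fetch_block(const volatile int16_t *fpga_window, int16_t *dst)
{
    if (!g_block_ready) {
        return 0;
    }
    g_block_ready = 0;
    for (size_t i = 0; i < BLOCK_LEN; i++) {
        dst[i] = fpga_window[i];
    }
    return BLOCK_LEN;
}
```

Keeping the handler down to setting a flag leaves the copy, and any processing that follows, in the main loop where it is easier to test.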

The FPGA development performed at this phase lays the foundation for the product’s ultimate success, both within the product development effort and upon release to the market. By focusing on just the low-level interface, adequate time can be dedicated to testing these essential operations. Only once the FPGA is reliably performing this interfacing role can this milestone be concluded with confidence.

Key deliverables from this initial milestone include the following benefits:

- The full signal path - all amplifications, attenuations, and conversions - will have been tested and validated.

- The project development time and effort will have been reduced by initially implementing the algorithms in software (C/C++); this is of considerable value to management and other stakeholders, who must see the feasibility of this project before approving future design phases.

- The lessons learned from implementing the algorithms in C/C++ will be directly transferable to HDL implementations through the use of software-to-HDL tools, e.g., Xilinx Vivado HLS.
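To make the software-first idea concrete, here is a small filter written in plain C in a style that high-level synthesis tools generally accept: fixed loop bounds, integer arithmetic, and no dynamic memory. The filter itself (a four-tap moving average) and all names are illustrative assumptions, not the algorithm used by the author.

```c
#include <stdint.h>

#define NUM_TAPS 4 /* hypothetical filter length */

/* Hypothetical Q15 coefficients: four equal taps of 0.25 (a moving average). */
static const int16_t k_taps[NUM_TAPS] = {8192, 8192, 8192, 8192};

/* Filters n samples from in[] to out[]; samples before in[0] are treated as 0. */
void fir_q15(const int16_t *in, int16_t *out, int n)
{
    for (int i = 0; i < n; i++) {
        int32_t acc = 0;
        for (int t = 0; t < NUM_TAPS; t++) {
            if (i - t >= 0) {
                acc += (int32_t)k_taps[t] * in[i - t];
            }
        }
        /* Divide by 2^15 to remove the Q15 coefficient scaling. */
        out[i] = (int16_t)(acc / 32768);
    }
}
```

A function like this can be exercised on the MCU, or on a PC, against recorded ADC data long before any HDL exists.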

The system management with the microcontroller milestone

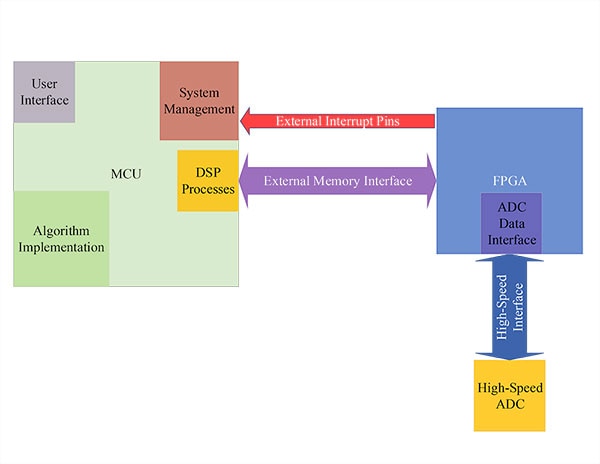

Figure 3: Architecture - system management with the microcontroller. (Image source: CEPD)


The second development stage that this co-processor approach offers is defined by moving the DSP processes and algorithm implementations from the MCU to the FPGA. The FPGA is still responsible for the high-speed ADC interface; however, by assuming these other roles, the speed and parallelism offered by the FPGA are fully utilized. Additionally, unlike on the MCU, multiple instances of the DSP processes and algorithm channels can be implemented and run simultaneously.

Building upon the lessons learned from the MCU implementation, the designer carries this confidence forward into the next milestone. Tools such as the aforementioned Vivado HLS from Xilinx provide a functional translation from the executable C/C++ code to synthesizable HDL. Timing constraints, process parameters, and other user preferences must still be defined and implemented; however, the core functionality is preserved and translated to the FPGA fabric.

For this milestone, the MCU’s role is that of a system manager. Status and control registers within the FPGA are monitored, updated, and reported on by the MCU. Furthermore, the MCU manages the user interface (UI). This UI could take the form of a web server accessed over an Ethernet or Wi-Fi connection, or it could be an industrial touchscreen interface giving access to users at the point of use. The key takeaway from the MCU’s new, more refined role is this: with the MCU relieved of the computationally intensive processing tasks, both the MCU and the FPGA are now being used for tasks to which they are well suited.
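A sketch of the system-manager role, under an assumed register layout, is shown below: the MCU decodes a status word reported by the FPGA and updates one field of a control word without disturbing the others. The bit positions and names are hypothetical.

```c
#include <stdint.h>

/* Hypothetical bit layout of the FPGA's status and control registers. */
#define STAT_DSP_BUSY   (1u << 0)
#define STAT_OVERFLOW   (1u << 1)
#define CTRL_DSP_ENABLE (1u << 0)
#define CTRL_GAIN_SHIFT 4
#define CTRL_GAIN_MASK  (0xFu << CTRL_GAIN_SHIFT)

typedef struct {
    int busy;     /* nonzero while the FPGA's DSP chain is running */
    int overflow; /* nonzero if the FPGA flagged an overflow */
} dsp_status_t;

/* Turns a raw status word into named fields for logging or the UI. */
dsp_status_t decode_status(uint16_t status_reg)
{
    dsp_status_t s;
    s.busy = (status_reg & STAT_DSP_BUSY) != 0;
    s.overflow = (status_reg & STAT_OVERFLOW) != 0;
    return s;
}

/* Returns the control word with its 4-bit gain field replaced;
 * all other control bits are left as they were. */
uint16_t set_gain(uint16_t control_reg, unsigned gain)
{
    control_reg &= (uint16_t)~CTRL_GAIN_MASK;
    control_reg |= (uint16_t)((gain << CTRL_GAIN_SHIFT) & CTRL_GAIN_MASK);
    return control_reg;
}
```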

Key deliverables from this milestone include these benefits:

- Fast, parallel execution of DSP processes and algorithm implementations is provided by the FPGA. The MCU provides a responsive and streamlined UI and manages the product’s processes.

- Because the algorithms were first developed and validated within the MCU, algorithmic risks have been mitigated, and these mitigations carry over into synthesizable HDL. Tools such as Vivado HLS make this translation an easier process. Furthermore, FPGA-specific risks can be mitigated through integrated simulation tools, such as those in the Vivado Design Suite.

- Stakeholders are not exposed to significant risk by moving the processes over to the FPGA. On the contrary, they get to see and enjoy the benefits that the FPGA’s speed and parallelism provide. Measurable performance improvements are observed and focus can now be given to readying this design for manufacturing.

The product deployment milestone

With the computationally intensive processing being addressed within the FPGA, and the MCU handling its system management and user interface roles, the product is ready for deployment. This article does not advocate bypassing Alpha and Beta releases; rather, the emphasis for this milestone is on the capabilities that the co-processor architecture brings to product deployment.

Both the MCU and FPGA are field-updatable devices. Several advancements have been made to make FPGA updates just as accessible as software updates. Moreover, since the FPGA is within the addressable memory space of the MCU, the MCU can serve as the access point for the entire system, receiving updates both for itself and for the FPGA. Updates can be conditionally scheduled, distributed, and customized on a per-end-user basis. Finally, user and use-case logs can be maintained and associated with specific build implementations. From these data sets, performance can continue to be refined and enhanced even after the product is in the field.
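One way the MCU could act as the single access point for updates is to accept a package, check which device it targets, and verify its integrity before applying it. The header format below is purely hypothetical; only the CRC-32 routine is a standard algorithm (the common reflected form with polynomial 0xEDB88320).

```c
#include <stddef.h>
#include <stdint.h>

typedef enum { TARGET_MCU = 0, TARGET_FPGA = 1 } update_target_t;

/* Hypothetical update header; a real product would define its own format. */
typedef struct {
    update_target_t target; /* which device the payload is for */
    uint32_t length;        /* payload size in bytes */
    uint32_t crc32;         /* CRC-32 of the payload */
} update_header_t;

/* Standard bitwise CRC-32 (reflected polynomial 0xEDB88320). */
uint32_t crc32_compute(const uint8_t *data, size_t len)
{
    uint32_t crc = 0xFFFFFFFFu;
    for (size_t i = 0; i < len; i++) {
        crc ^= data[i];
        for (int b = 0; b < 8; b++) {
            crc = (crc & 1u) ? ((crc >> 1) ^ 0xEDB88320u) : (crc >> 1);
        }
    }
    return ~crc;
}

/* Returns 1 if the package names a known target, has the announced
 * length, and its payload matches the announced CRC; otherwise 0. */
int update_is_valid(const update_header_t *hdr, const uint8_t *payload, size_t received)
{
    if (hdr->target != TARGET_MCU && hdr->target != TARGET_FPGA) {
        return 0;
    }
    if (hdr->length != received) {
        return 0;
    }
    return crc32_compute(payload, received) == hdr->crc32;
}
```

After validation, an MCU image would go to the MCU's own update mechanism, while an FPGA image would be written to wherever the FPGA loads its configuration from; both steps are device-specific and are left out here.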

Perhaps the strengths of this total-system updatability are no more underscored than in space-based applications. Once a product is launched, maintenance and updates must be performed remotely. This could be as simple as changing logical conditions, or as complicated as updating a communications modulation scheme. The programmability offered by FPGA technologies and the co-processor architecture can accommodate the entirety of this range of capabilities, all while offering radiation-hardened component choices.

The final key takeaway from this milestone is progressive cost reduction. Cost reductions, bill of materials (BOM) changes, and other optimizations can also occur at this milestone. During field deployments, it may be discovered that the product can operate just as well with a less expensive MCU or a less capable FPGA. Because of the co-processor architecture, designers are not stuck using components whose capabilities exceed their application’s needs. Furthermore, should a component become unavailable, the architecture allows for new components to be integrated into the design. This is not the case with a single-chip, system-on-chip (SoC) architecture, or with a high-performance DSP or MCU that attempts to handle all of the product’s processing. The co-processor architecture is a good mix of capability and flexibility, giving the designer more choices and freedoms both during the development phases and upon release to the market.

Supporting research and related case studies

Satellite communications example

In short, the value of a co-processor is to offload the primary processing unit so that tasks are executed on hardware that can accelerate and streamline them. The advantage of such a design choice is a net increase in computational speed and capabilities, and, as this article argues, a reduction in development cost and development time. Perhaps one of the most compelling realms for these benefits is in the area of space communications systems.

In their publication, FPGA based hardware as coprocessor, G. Prasad and N. Vasantha detail how data processing within an FPGA meets the computational needs of satellite communications systems without the high non-recurring engineering (NRE) costs of application-specific integrated circuits (ASICs) or the application-specific limitations of a hard-architecture processor. Just as was described in the Digital Signal Processing with the Microcontroller milestone, their design begins with the application processor performing a majority of the computationally intensive algorithms. From this starting point, they identify the key sections of software that consume a majority of the central processing unit (CPU) clock cycles and migrate these sections over to an HDL implementation. The graphical representation is highly similar to what has been presented so far; however, they have chosen to represent the Application Program as its own independent block, as it can be realized either in the Host (Processor) or in the FPGA-based Hardware.

Figure 4: Application program, host processor, and FPGA-based hardware - used in satellite communications example.


By utilizing a peripheral component interconnect (PCI) interface and the host processor’s direct memory access (DMA), peripheral performance is dramatically increased. This is most evident in the improvements to the Derandomization process. When this process was performed in the host processor’s software, there was clearly a bottleneck in the real-time response of the system. However, when it was moved to the FPGA, the following benefits were observed:

- The Derandomization process executed in real-time without causing bottlenecks.

- The host processor’s computational overhead was significantly reduced, and it could now better perform a desired logging role.

- The total performance of the entire system was scaled up.

All of this was achieved without the costs associated with an ASIC, and while enjoying the flexibility of programmable logic [5]. Satellite communications present considerable challenges, and this approach can verifiably meet them while continuing to provide design flexibility.

Automotive infotainment example

Entertainment systems within automobiles are distinguishing features for discerning consumers. Unlike a majority of automotive electronics, these devices are highly visible and are expected to provide exceptional response time and performance. However, designers are often squeezed between the current needs of the design and the flexibility that future features will require. For this example, the implementation needs of signal processing and wireless communications will be used to highlight the strengths of the co-processor hardware architecture.

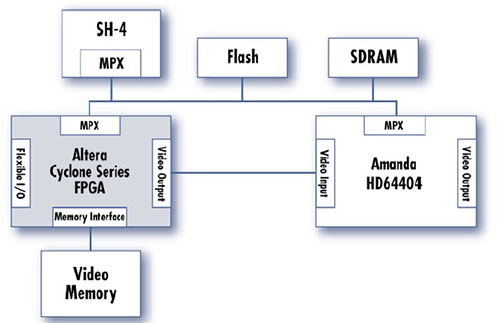

One of the predominant automotive entertainment system architectures used was published by the Delphi Delco Electronics Systems corporation. This architecture employed an SH-4 MCU with a companion ASIC, Hitachi’s HD64404 Amanda peripheral. This architecture satisfied over 75% of the automotive market’s baseline entertainment functionality; however, it lacked the ability to address video processing applications and wireless communications. By including an FPGA within this existing architecture, further flexibility and capability can be added to this already-existing design approach.

Figure 5: Infotainment FPGA co-processor architecture example 1.


The Figure 5 architecture is suitable for both video processing and wireless communications management. By pushing the DSP functionalities to the FPGA, the Amanda processor can serve a system management role and is freed to implement a wireless communications stack. As both the Amanda and FPGA have access to the external memory, data can be rapidly exchanged among the system’s processors and components.

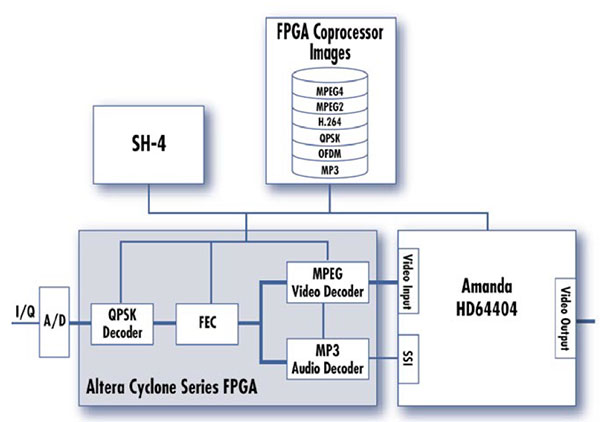

Figure 6: Infotainment FPGA co-processor architecture example 2.


The second infotainment example, shown in Figure 6, highlights the FPGA’s ability to address both the incoming high-speed analog data and the compression and encoding needed for video applications. In fact, all of this functionality can be pushed into the FPGA, and through the use of parallel processing, it can all be handled in real-time.

By including an FPGA within an existing hardware architecture, the proven performance of the existing hardware can be coupled with flexibility and future-proofing. Even within existing systems, the co-processor architecture provides options to designers, which would otherwise not be available [6].

Rapid prototyping advantages

At its heart, the rapid prototyping process strives to cover a substantial amount of product development area by executing tasks in parallel, identifying “bugs” and design issues quickly, and validating data and signal paths, especially those within a project’s critical path. However, for this process to truly produce streamlined, efficient results there must be sufficient expertise in the project areas required.

Traditionally, this means that there must be a hardware engineer, an embedded software or DSP engineer, and an HDL engineer. There are plenty of interdisciplinary professionals who may be able to satisfy multiple roles; however, there is still substantial project overhead involved in coordinating these efforts.

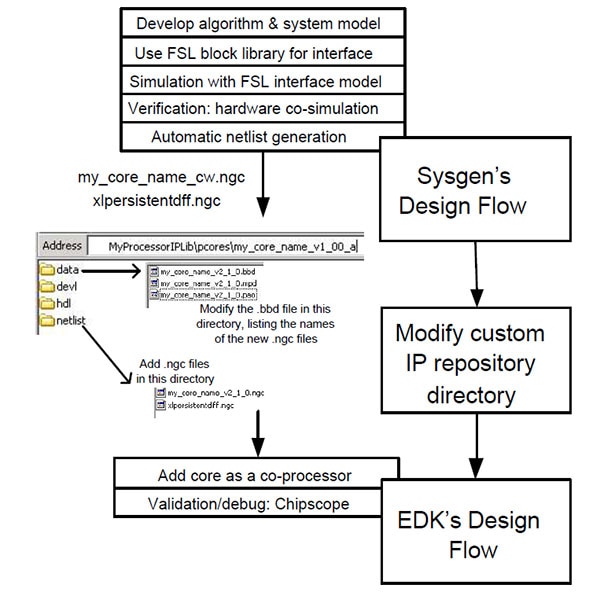

In their paper, An FPGA based rapid prototyping platform for wavelet coprocessors, the authors promote the idea that using a co-processor architecture allows a single DSP engineer to fulfill all of these roles efficiently and effectively. For this study, the team began by designing and simulating the desired DSP functionality within MATLAB’s Simulink tool. This served two primary functions: 1) it verified the desired performance through simulation, and 2) it served as a baseline against which future design choices could be compared.

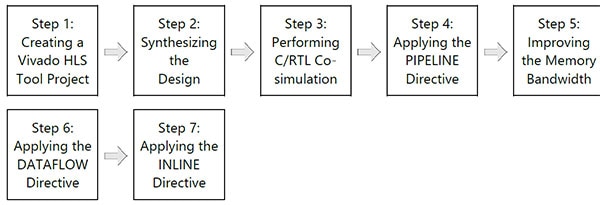

After simulation, critical functionalities were identified and divided into different cores – these are soft-core components and processors that can be synthesized within an FPGA. The most important step during this work was to define the interface among these cores and components and to compare the data-exchange performance against the desired, simulated performance. This design process closely aligned with Xilinx’s design flow for embedded systems and is summarized in Figure 7 below.

Figure 7: Implementation design flow.


By dividing the system into synthesizable cores, the DSP engineer can focus upon the most critical aspects of the signal processing chain. They do not need to be an expert in hardware or HDL to modify, route, or implement different soft-core processors or components within the FPGA. So long as the designer is aware of the interface and the formats of the data, they have full control over the signal paths and can refine the system’s performance.

Empirical findings – the discrete cosine transform case study

The empirical findings not only confirmed the flexibility availed by the co-processor architecture to the embedded systems designer, but also showcased the performance-enhancing options available with modern FPGA tools. Enhancements, like the ones mentioned below, may not be available or may have less impact for other hardware architectures. The discrete cosine transform (DCT) was selected as a computationally intensive algorithm, and its progression from a C-based implementation to an HDL-based implementation was at the heart of these findings. DCT was chosen since this algorithm is used in digital signal processing for pattern recognition and filtering [8]. The empirical findings were based upon a laboratory exercise, which was completed by the author and coworkers, to obtain the Xilinx Alliance Partner certification for 2020 - 2021.

The following tools and devices were used in this effort:

- Vivado HLS v2019

- The device for assessment and simulation was the xczu7ev-ffvc1156-2-e

Beginning with the C-based implementation, the DCT algorithm accepts two arrays of 16-bit numbers; array “a” is the input array to the DCT, and array “b” is the output array from the DCT. The data width (DW) is therefore defined as 16, and the number of elements within the arrays (N) is 1024/DW, or 64. Last of all, the size of the DCT matrix (DCT_SIZE) is set to 8, which means an 8 x 8 matrix is used.
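The parameters above can be written down directly in C. The sketch below is not the code used in the exercise; it is a minimal integer 8 x 8 DCT built on the same parameters, using the standard DCT-II basis values scaled by 2^13 and a row-then-column decomposition. The function names, the scaling choice, and the row-major layout of the 64-element arrays are assumptions made for illustration.

```c
#include <stdint.h>

#define DW          16          /* data width in bits */
#define N           (1024 / DW) /* 64 elements per array */
#define DCT_SIZE    8           /* 8 x 8 DCT matrix */
#define COEFF_SCALE 8192        /* coefficients are scaled by 2^13 */

/* DCT-II basis: row k, column n holds c(k) * cos((2n + 1) * k * pi / 16)
 * scaled by 2^13, with c(0) = 1 and c(k) = sqrt(2) for k > 0. */
static const int16_t dct_coeff[DCT_SIZE][DCT_SIZE] = {
    { 8192,   8192,   8192,   8192,   8192,   8192,   8192,   8192},
    {11363,   9633,   6436,   2260,  -2260,  -6436,  -9633, -11363},
    {10703,   4433,  -4433, -10703, -10703,  -4433,   4433,  10703},
    { 9633,  -2260, -11363,  -6436,   6436,  11363,   2260,  -9633},
    { 8192,  -8192,  -8192,   8192,   8192,  -8192,  -8192,   8192},
    { 6436, -11363,   2260,   9633,  -9633,  -2260,  11363,  -6436},
    { 4433, -10703,  10703,  -4433,  -4433,  10703, -10703,   4433},
    { 2260,  -6436,   9633, -11363,  11363,  -9633,   6436,  -2260}
};

/* One-dimensional transform of DCT_SIZE values. */
static void dct_1d(const int32_t in[DCT_SIZE], int32_t out[DCT_SIZE])
{
    for (int k = 0; k < DCT_SIZE; k++) {
        int32_t sum = 0;
        for (int n = 0; n < DCT_SIZE; n++) {
            sum += dct_coeff[k][n] * in[n];
        }
        out[k] = sum / COEFF_SCALE; /* remove the 2^13 coefficient scaling */
    }
}

/* Two-dimensional transform: rows first, then columns.
 * a[] is the input block and b[] the output block, both stored row by row.
 * Results are narrowed to 16 bits; large inputs may not fit. */
void dct(const int16_t a[N], int16_t b[N])
{
    int32_t tmp[DCT_SIZE][DCT_SIZE];
    int32_t line[DCT_SIZE];
    int32_t res[DCT_SIZE];

    for (int r = 0; r < DCT_SIZE; r++) {
        for (int c = 0; c < DCT_SIZE; c++) {
            line[c] = a[r * DCT_SIZE + c];
        }
        dct_1d(line, res);
        for (int c = 0; c < DCT_SIZE; c++) {
            tmp[r][c] = res[c];
        }
    }
    for (int c = 0; c < DCT_SIZE; c++) {
        for (int r = 0; r < DCT_SIZE; r++) {
            line[r] = tmp[r][c];
        }
        dct_1d(line, res);
        for (int r = 0; r < DCT_SIZE; r++) {
            b[r * DCT_SIZE + c] = (int16_t)res[r];
        }
    }
}
```

With this scaling, a block filled with one constant value transforms to a single nonzero term at b[0], which makes a convenient first sanity check before moving on to recorded data.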

Following the premise of this article, the C-based algorithm implementation allows the designer to quickly develop and validate the algorithm’s functionality. Although it is an important consideration, this validation places functionality at a higher weighting than execution time. This weighting is allowed, since the ultimate implementation of this algorithm will be in an FPGA, where hardware acceleration, loop unrolling, and other techniques are readily available.

Figure 8: Xilinx Vivado HLS design flow.


Once the DCT code has been created within the Vivado HLS tool as a project, the next step is to begin synthesizing the design for FPGA implementation. It is at this step that some of the most impactful benefits of moving an algorithm’s execution from an MCU to an FPGA become apparent. For reference, this step is equivalent to the System Management with the Microcontroller milestone discussed above.

Modern FPGA tools allow for a suite of optimizations and enhancements that greatly enhance the performance of complex algorithms. Before analyzing the results, there are some important terms to keep in mind:

- Latency – The number of clock cycles required to execute all iterations of the loop [10]

- Interval – The number of clock cycles before the next iteration of a loop starts to process data [11]

- BRAM – Block Random Access Memory

- DSP48E – Digital Signal Processing Slice for the UltraScale Architecture

- FF – Flip-flop

- LUT – Look-up Table

- URAM – UltraRAM, a large on-chip memory block available in UltraScale+ devices
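As a rough illustration of how latency and interval relate for a single loop, the two helper functions below use an idealized model: a loop that is not pipelined runs its iterations back to back, while a pipelined loop starts a new iteration every `interval` cycles. The function names are made up for this sketch, and the model ignores the few cycles of loop entry and exit overhead that a real synthesis report would include.

```c
/* Cycles for a loop whose iterations run one after another. */
unsigned long loop_cycles_sequential(unsigned long trip_count,
                                     unsigned long iter_latency)
{
    return trip_count * iter_latency;
}

/* Cycles for a pipelined loop: the first iteration takes iter_latency
 * cycles, and every later iteration starts `interval` cycles after the
 * one before it. */
unsigned long loop_cycles_pipelined(unsigned long trip_count,
                                    unsigned long iter_latency,
                                    unsigned long interval)
{
    if (trip_count == 0) {
        return 0;
    }
    return iter_latency + (trip_count - 1) * interval;
}
```

For example, under this model a 64-iteration loop with a 4-cycle body needs 256 cycles when run sequentially and 67 cycles when pipelined with an interval of 1.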


Table 1: FPGA algorithm execution optimization findings (latency and interval).


Table 2: FPGA algorithm execution optimization findings (resource utilization).

Default

The default optimization setting comes from the unaltered result of translating the C-based algorithm to synthesizable HDL. No optimizations are enabled, and this can be used as a performance reference to better understand the other optimizations.

Pipeline inner loop

The PIPELINE directive instructs Vivado HLS to pipeline the inner loops so that new data can start being processed while existing data is still in the pipeline. Thus, new data does not have to wait for the existing data to be complete before processing can begin.
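In source form, the directive can be expressed as a pragma placed inside the loop body it applies to. The function below is a made-up example rather than part of the DCT exercise; ordinary C compilers ignore the pragma (possibly with a warning), so the same file can still be compiled and tested on a PC.

```c
#include <stdint.h>

#define ROWS 8
#define COLS 8

/* Sums each row of a matrix. The pragma asks Vivado HLS to pipeline the
 * inner loop with a target initiation interval of one clock cycle. */
void row_sums(int16_t m[ROWS][COLS], int32_t sums[ROWS])
{
    for (int r = 0; r < ROWS; r++) {
        int32_t acc = 0;
        for (int c = 0; c < COLS; c++) {
#pragma HLS PIPELINE II=1
            acc += m[r][c];
        }
        sums[r] = acc;
    }
}
```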

Pipeline outer loop

By applying the PIPELINE directive to the outer loop, the outer loop’s operations are now pipelined, and the inner loops are unrolled so that their operations occur concurrently. Both the latency and the interval are cut in half by applying this directive to the outer loop.

Array partition

This directive partitions the arrays accessed within the loops into smaller arrays or individual elements, so that several memory accesses can take place in the same clock cycle. By doing this, more RAM is consumed, but again, the execution time of this algorithm is cut in half.
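A small, made-up example of how array partitioning is typically requested in Vivado HLS source code follows. The local buffers are split into individual elements so that the unrolled multiply-accumulate loop is not limited by memory ports; the function name and array length are assumptions. As with other HLS pragmas, a normal C compiler ignores them.

```c
#include <stdint.h>

#define LEN 8

/* Dot product of two short vectors using fully partitioned local copies. */
int32_t dot_product(const int16_t x[LEN], const int16_t y[LEN])
{
    int16_t xb[LEN];
    int16_t yb[LEN];
#pragma HLS ARRAY_PARTITION variable=xb complete dim=1
#pragma HLS ARRAY_PARTITION variable=yb complete dim=1

    for (int i = 0; i < LEN; i++) {
        xb[i] = x[i];
        yb[i] = y[i];
    }

    int32_t acc = 0;
    for (int i = 0; i < LEN; i++) {
#pragma HLS UNROLL
        acc += (int32_t)xb[i] * yb[i];
    }
    return acc;
}
```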

Dataflow

This directive enables task-level pipelining: the functions and loops within the region can overlap in their execution, which reduces the number of clock cycles between successive input reads. In this exercise, the directive is applied to the top-level function, and only the loops and functions exposed at that level benefit from it.
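In Vivado HLS source code, DATAFLOW is usually applied to a function whose body is a chain of stages that hand data to one another. The three stages below are invented for illustration and are not the stages of the DCT design; on a PC the pragma is ignored and the stages simply run in order.

```c
#include <stdint.h>

#define LEN 8

static void read_stage(const int16_t in[LEN], int16_t buf[LEN])
{
    for (int i = 0; i < LEN; i++) {
        buf[i] = in[i];
    }
}

static void scale_stage(const int16_t buf[LEN], int16_t scaled[LEN])
{
    for (int i = 0; i < LEN; i++) {
        scaled[i] = (int16_t)(buf[i] * 2);
    }
}

static void write_stage(const int16_t scaled[LEN], int16_t out[LEN])
{
    for (int i = 0; i < LEN; i++) {
        out[i] = scaled[i];
    }
}

/* Top-level function: with DATAFLOW, Vivado HLS may start a later stage
 * before the earlier one has finished its whole block. */
void process(const int16_t in[LEN], int16_t out[LEN])
{
#pragma HLS DATAFLOW
    int16_t buf[LEN];
    int16_t scaled[LEN];

    read_stage(in, buf);
    scale_stage(buf, scaled);
    write_stage(scaled, out);
}
```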

Inline

The INLINE directive flattens the function hierarchy, so that the loops of the inlined functions, both inner and outer, can be optimized together. Both row and column processes can now execute concurrently. The number of required clock cycles is kept to a minimum, even if this consumes more FPGA resources.
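For completeness, this is how an INLINE request typically appears in Vivado HLS source: the pragma sits inside the function that should be dissolved into its caller. The saturating-add helper is a hypothetical example, not part of the DCT code.

```c
#include <stdint.h>

#define LEN 8

/* Clamps a 32-bit value into the 16-bit range. The pragma asks Vivado HLS
 * to merge this function into its caller rather than keep it as a
 * separate block in the hierarchy. */
static int16_t clamp16(int32_t v)
{
#pragma HLS INLINE
    if (v > INT16_MAX) {
        return INT16_MAX;
    }
    if (v < INT16_MIN) {
        return INT16_MIN;
    }
    return (int16_t)v;
}

/* Element-wise saturating addition of two vectors. */
void add_saturate(const int16_t a[LEN], const int16_t b[LEN], int16_t out[LEN])
{
    for (int i = 0; i < LEN; i++) {
        out[i] = clamp16((int32_t)a[i] + b[i]);
    }
}
```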

Conclusion

The co-processor hardware architecture provides the embedded designer with a high-performance platform that maintains its design flexibility throughout development and past product release. By first validating algorithms in C or C++, processes, data and signal paths, and critical functionality can be verified in a relatively short amount of time. Then, by translating the processor-intensive algorithms into the co-processor FPGA, the designer can enjoy the benefits of hardware acceleration and a more modular design.

Should parts become obsolete or optimizations be required, the same architecture can allow for these changes. New MCUs and new FPGAs can be fitted into the design, all the while the interfaces can remain relatively untouched. Additionally, since both the MCU and FPGA are field updatable, user-specific changes and optimizations can be applied in the field and remotely.

In closing, this architecture blends the development speed and availability of an MCU with the performance and expandability of an FPGA. With optimizations and performance enhancements available at every development step, the co-processor architecture can meet the needs of even the most challenging requirements – both for today’s designs and beyond.
